2.1. Single Program, Multiple Data

This code forms the basis of all of the other examples that follow. It is the fundamental way we structure parallel programs today.

Program file: 00spmd.py

A helper Python program: The examples in this book are accompanied by a program called run.py. It makes the examples easier to run if you are unfamiliar with mpirun, a program that ordinarily needs several command line arguments to run our examples correctly.

Example usage:

python run.py ./00spmd.py 4

Here the 4 signifies the number of processes for MPI to start up.

run.py executes this program within mpirun using the number of processes given.
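To make the role of such a helper more concrete, here is a minimal sketch of how a launcher might assemble an mpirun command line. It is not the actual contents of run.py: the function name build_mpirun_command is hypothetical, and the real helper may pass additional arguments. The only mpirun option used is -np, which sets the number of processes.

```python
import subprocess


def build_mpirun_command(program, num_processes):
    """Return the argument list that starts `program` under mpirun.

    Hypothetical helper for illustration; the real run.py may differ.
    """
    if num_processes < 1:
        raise ValueError("need at least one process")
    # -np tells mpirun how many copies of the program to start
    return ["mpirun", "-np", str(num_processes), "python", program]


def launch(program, num_processes):
    """Run the program under mpirun (requires an MPI installation)."""
    subprocess.run(build_mpirun_command(program, num_processes), check=True)


# Show the command that corresponds to the example usage above
print(" ".join(build_mpirun_command("./00spmd.py", 4)))
```

With these assumptions, the example usage corresponds to the command mpirun -np 4 python ./00spmd.py.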

Exercise:

Rerun, using varying numbers of processes, e.g. 2, 8, 16 (i.e., vary the last argument to run.py)

2.1.1. Dive into the code

Here is the heart of this program that illustrates the concept of a single program that uses multiple processes, each containing and producing its own small bit of data (in this case printing something about itself).

    from mpi4py import MPI

    def main():
        comm = MPI.COMM_WORLD
        id = comm.Get_rank()                    # number of the process running the code
        numProcesses = comm.Get_size()          # total number of processes running
        myHostName = MPI.Get_processor_name()   # machine name running the code

        print("Greetings from process {} of {} on {}"
              .format(id, numProcesses, myHostName))

    ########## Run the main function
    main()

Let’s look at each line in main() and the variables used.

comm The fundamental notion with this type of computing is a process running independently on the computer. With one single program like this, we can specify that we want to start several processes, each of which can communicate. The mechanism for communication is initialized when the program starts up, and the object that represents the means of using communication between processes is called MPI.COMM_WORLD, which we place in the variable comm.

id Every process can identify itself with a number. We get that number by asking comm for it using Get_rank().

numProcesses It is helpful to know how many processes have started up, because this number can be specified differently every time you run this type of program. We get it by asking comm for it using Get_size().

myHostName When you run this code on a cluster of computers, it is sometimes useful to know which computer is running a certain piece of code. A particular computer is often called a ‘host’, which is why we call this variable myHostName. Unlike the previous two values, we get this one by asking the MPI module itself, rather than comm, using MPI.Get_processor_name().

These four variables appear in nearly every MPI program. The first three are usually needed for writing correct programs, and the fourth is often used for debugging and for analyzing where certain computations are running.

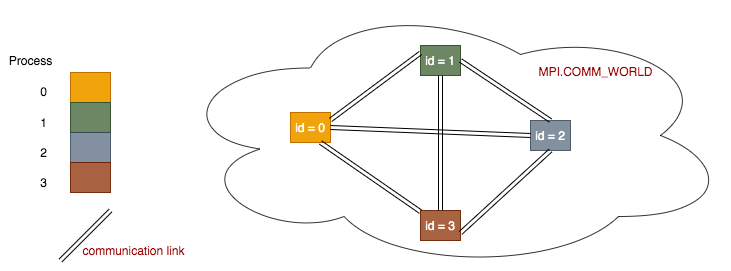

The fundamental idea of message passing programs can be illustrated like this:

Each process is set up within a communication network to be able to communicate with every other process via communication links. Each process is set up to have its own number, or id, which starts at 0.

Note

Each process holds its own copies of the four variables above. So even though there is one single program, it is running multiple times in separate processes, each holding its own data values. This is the reason for the name of the pattern this code represents: single program, multiple data. The print line at the end of main() represents the different output data being produced by each process.
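The idea in this note can be mimicked without MPI. The sketch below is only a simulation, not how MPI works internally: each loop iteration stands in for one process, and the names greeting and simulate_spmd, along with the host name localhost, are assumptions made for illustration. It shows the same function producing different output purely because each "process" holds a different id.

```python
def greeting(rank, size, host):
    """The single program: identical code for every process."""
    return "Greetings from process {} of {} on {}".format(rank, size, host)


def simulate_spmd(size, host="localhost"):
    """Call the same function once per simulated process.

    Ranks run from 0 to size - 1, as they do in MPI. Real MPI
    processes run concurrently; this loop runs them one at a time.
    """
    return [greeting(rank, size, host) for rank in range(size)]


for line in simulate_spmd(4):
    print(line)
```

Because this loop runs sequentially, its lines always appear in id order. Real MPI processes run independently, so the order of their printed lines is not guaranteed and can change from one run to the next.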